Design and build AI products and AX, working with founders and teams from concept to shipped product.

Interactive

AI product diagnostic

Tell me about your product. It takes about two minutes, and you’ll get a pattern-based diagnosis.

Free · Takes about 5 minutes

Where I work with you

Foundation Work

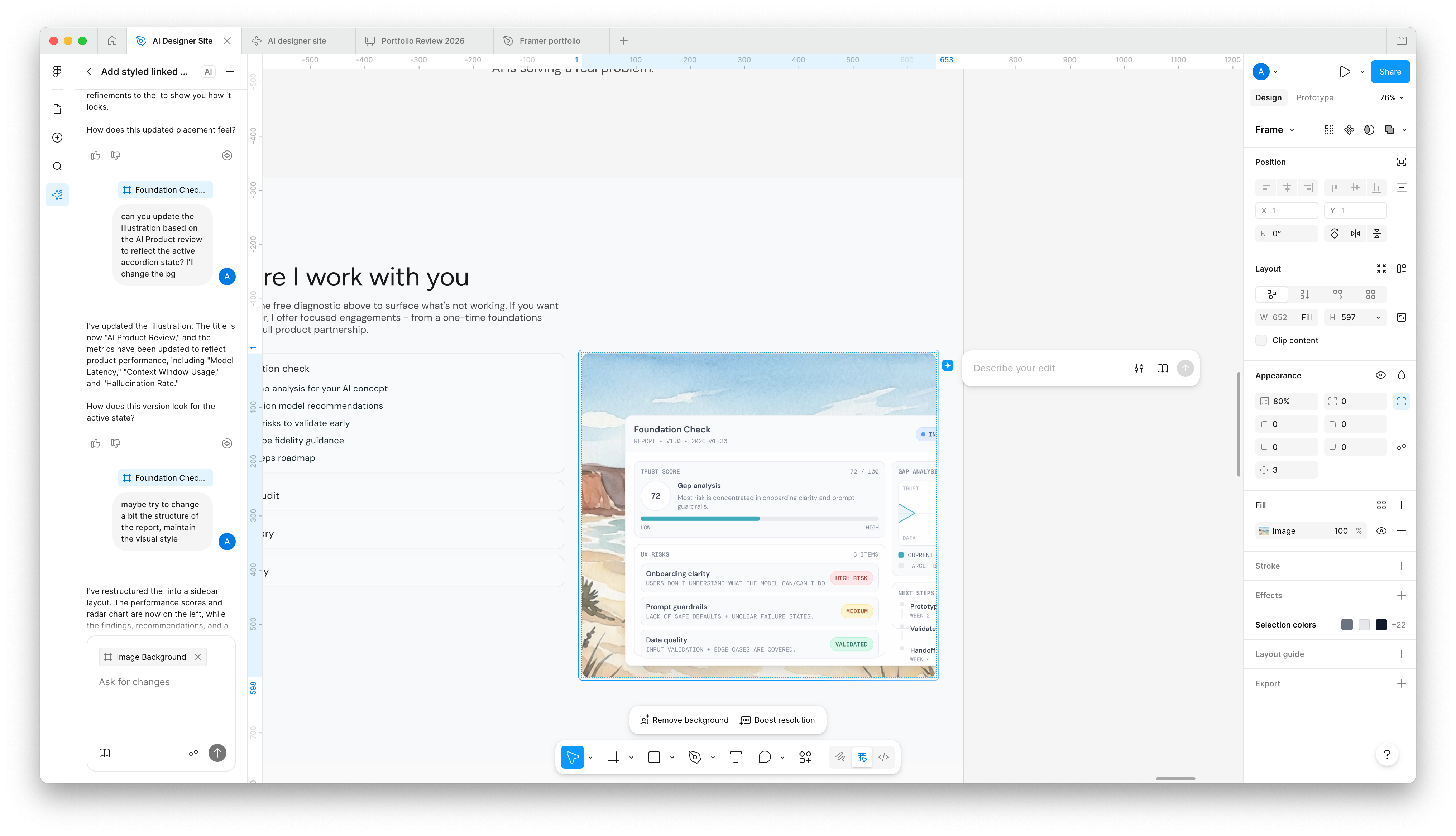

Report • v1.0 • 2026-01-30

Onboarding clarity

Users don't understand what the model can/can't do.

Prompt guardrails

Lack of safe defaults + unclear failure states.

Data quality

Input validation + edge cases are covered.

Trust

UX

Data

Current

Target

Prototype onboarding

Week 2

Validate with users

Handoff + roadmap

Week 4

Selected work

B2B SaaS • 25–30% faster completion

Mapped the AI workflow end to end. Built the transparency and feedback patterns that turned confusion into confidence.

Side Project • High engagement

Side project turned working product. Designed the coaching conversation structure, AI persona, and voice integration — validated with real users.

Ad Platform • Validated approach

Advertisers were leaving plista mid-workflow to use ChatGPT for ad creative. Designed 4 AI features to keep the creative step inside the platform.

What others say

Very talented designer with a deep product experience understanding and tech challenges. I had the privilege to work together on challenging AI product design and website. It was a huge pleasure to Fuse! Highly recommended as a team player with a beautiful personality

Ilan Dray

Independent Designer, worked with Aviad at Convrt

Avi's love for deep user research sets him apart. He has a knack for wanting to understand the what and why, which leads him to designing the right solution. He has a keen eye for detail, and would question the why to make sure that the user is getting the best possible outcome.

Amaka Madueke-Olufayo

Agile Product Manager, worked with Aviad at plista

I wholeheartedly recommend Aviad as an outstanding UX/UI designer. His user-centric approach consistently delivers visually appealing and highly intuitive designs. Aviad's creative, innovative, and forward-thinking mindset shines through in every project, translating user insights into elegant solutions.

Anika Bornschein

Head of Growth Marketing at plista

Good AI gets shipped.

Great AI gets used.

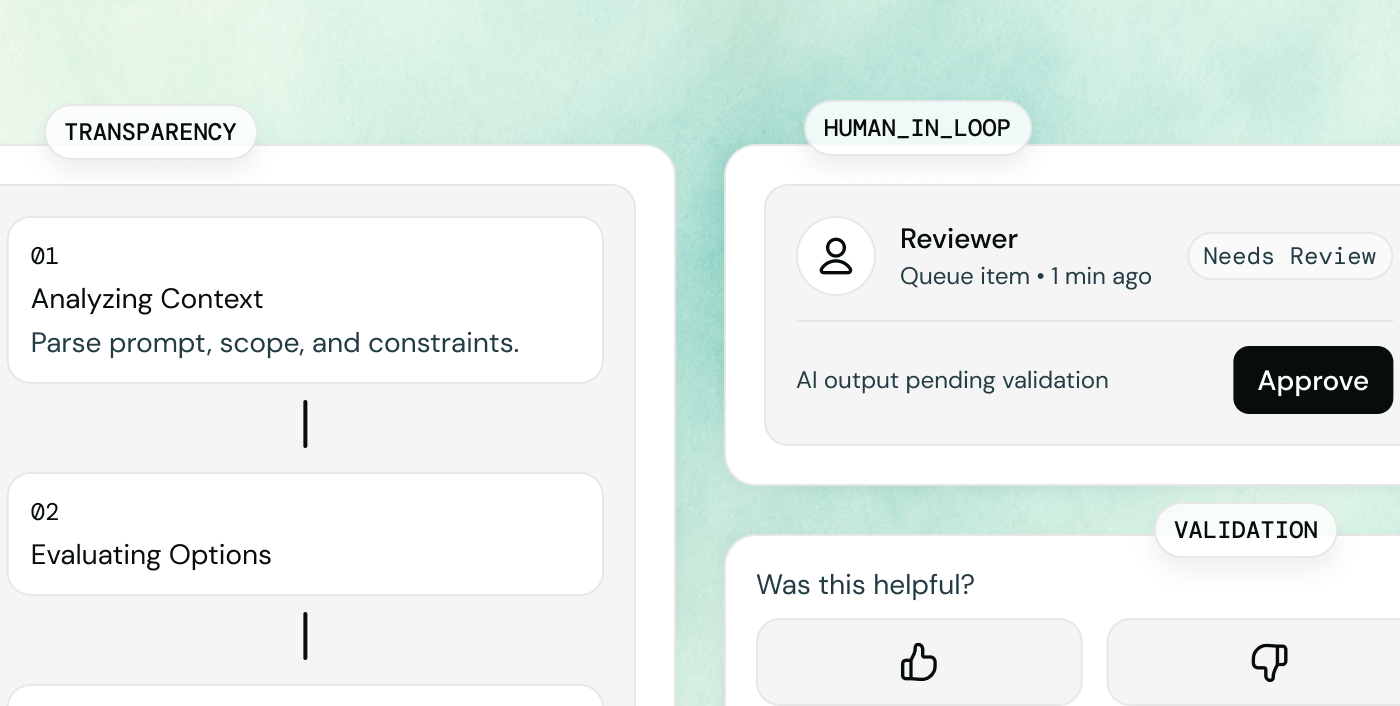

Transparency through conversation

AI shouldn’t be a black box. I design interfaces that explain their reasoning and process.

User Control

Give users agency over AI outputs with explicit actions: undo/redo, edit suggestions, clear state indicators, and a confirm step before finalizing.

Conscious AI decisions

Every feature must solve a real problem, not just exist because it’s possible. Know when to automate and when to empower.

Design for trust, not hype

Building long-term value by prioritizing reliability and user confidence over novelty.

The one in the yellow shirt is me. I'm Aviad :)

I work closely with founders and product teams, figuring out together how AI should actually behave with users. I've been designing B2B SaaS products for over five years, the last few focused on AI.

AI keeps changing, but some of the ways users interact with it keep coming back. The hesitation before trusting an output. The moment they realize they can push back. The expectations they arrive with before they even touch the product. Users are building real mental models for AI now, with understanding and expectations to match. I researched these patterns, and the diagnostic tool on this site is built from that work. Products that fit those mental models tend to get used.

What keeps me in it, honestly, is watching how people make sense of something they can't fully see or understand, and how the experience keeps evolving at rapid speed, even as you read this.

See more of my work

Recent articles

You're Not Building an Agent. You're Building a Better Version of Yourself.

A couple of months ago I finished writing a document that felt oddly personal.

UX Patterns for AI Products (That Actually Work)

Designing AI products that users actually trust. Six patterns I keep seeing across different AI products and features.

The part I skipped (and missed)

For the past month I’ve been building my site with Claude Code, Cursor, and a bunch of agents.

Let's talk

Available for collaboration, consulting, and making great products.

Berlin-based, remote-friendly.